In one recent mathematics lesson, a student used AI not to get an answer, but to check whether a line of reasoning was mathematically valid. The AI responded quickly and confidently. It gave a mathematically plausible route forward. But it also shifted the task away from the question the student was actually trying to explore.

That moment may point to something important.

Much of the current debate about AI in education still focuses on whether systems can produce correct answers, helpful explanations, or improved outcomes. Those are important questions. But early classroom observations from a small research project in secondary mathematics suggest that we may not yet be paying enough attention to something else: what happens in the interaction itself.

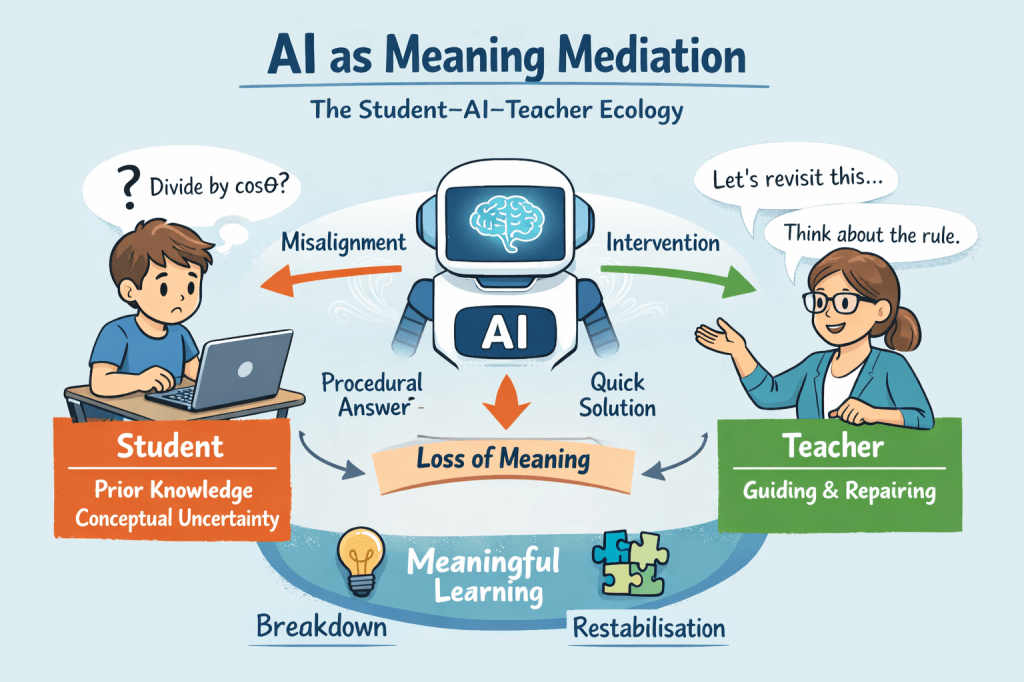

This work forms part of a small-scale research project funded by the British Educational Research Association (BERA), exploring how students interact with generative AI in secondary mathematics classrooms. What is beginning to emerge is not simply a question of whether AI is right or wrong, helpful or unhelpful. The more interesting issue may be whether students can actually make use of what the AI gives them in ways that support understanding.

What we are seeing in lessons

Across a series of early observation sessions, two broad patterns have become visible.

In some cases, students use AI as a quick source of reassurance or confirmation. It helps them begin, check, or compare. But in other cases, the interaction begins to drift. The AI offers a dense explanation, or moves too quickly toward a solution, or assumes a form of reasoning that does not quite match the learner’s own.

When that happens, a familiar pattern can appear:

- the AI gives a full answer or explanation

- the student cannot fully make sense of it

- the interaction becomes overloaded or misaligned

- understanding remains fragile, even if the answer looks correct

This matters because classroom learning is not just about being shown a solution. It is about whether a learner can follow, reconstruct, and work with what is being shown.

In one lower-attaining case, a student was working with simple linear cost models. The AI gave a complete explanation, but the student struggled to connect the symbolic form of the model to the underlying idea. It was only when the teacher slowed the interaction, isolated one part of the expression, and linked it to prior knowledge that the student’s understanding began to stabilise.
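
As a purely illustrative sketch (the numbers and symbols here are assumptions, not taken from the observed lesson), a linear cost model of this kind might look like:

$$
C = 20 + 5n
$$

Here $C$ would be the total cost, $20$ a fixed charge, and $5n$ the cost of $n$ items at $5$ each. Isolating a single term such as $5n$ and reading it as "5 for every item" is the kind of step that can connect the symbols to something the student already knows.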

In a contrasting case with a high-attaining Further Mathematics student, the issue was different. Here the student’s concern was conceptually sophisticated: when is it mathematically valid to divide by an expression that may be zero? The AI repeatedly moved the dialogue toward an efficient solution rather than staying with the conceptual issue the student had raised. Again, the important educational work happened when the interaction was reopened and the distinction the student was trying to hold onto was kept in view.
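
A standard example of this kind of issue (chosen here for illustration; it is not the problem from the observed lesson) is solving $x^2 = 3x$. Dividing both sides by $x$ is only valid when $x \neq 0$, so the quick route silently loses a solution:

$$
x^2 = 3x \;\Longrightarrow\; x = 3 \quad (\text{valid only if } x \neq 0)
$$

$$
x^2 - 3x = 0 \;\Longrightarrow\; x(x - 3) = 0 \;\Longrightarrow\; x = 0 \ \text{or}\ x = 3
$$

The efficient method produces a correct-looking answer, while the conceptual question of when the division is allowed is exactly where the missing root $x = 0$ lives.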

These are different learners, in different parts of the mathematics curriculum, but a common issue appears in both cases: the AI can be procedurally helpful while still missing the point of the learner’s question.

Why this matters for inclusion

This is where the issue becomes especially important for inclusion.

AI can appear inclusive because it is available to many learners, often instantly, and can produce endless explanation on demand. But access is not the same thing as meaningful participation.

A student can have access to the tool and still not be able to make use of what it produces.

That seems particularly important in mathematics, where learners may differ not only in attainment but in how they interpret symbols, how much language density they can comfortably process, what prior schemas they bring to a task, and what kinds of reasoning pathways feel available to them.

For one student, an explanation may feel clear, structured, and useful. For another, the very same explanation may be too dense, too fast, too symbolic, or directed at the wrong problem.

From this point of view, inclusion is not simply about whether AI can provide support. It is about whether the interaction helps the learner build meaning in a way that remains usable to them.

That may sound obvious, but it is not how most conversations about AI in education are currently framed. Too often the question is: Did the system provide the right answer? The classroom observations suggest a different question may be more useful:

Can the learner reconstruct the meaning of what the AI has produced?

If the answer is no, then the system may be technically impressive while still being educationally excluding.

What teachers are doing that AI often is not

One of the clearest findings from these early observations is that teachers are still doing crucial interactional work that current AI systems do not do very well.

That work includes:

- slowing the interaction down

- drawing attention to the key idea in a task

- linking new explanations to something the student already knows

- allowing a student to reason in a way that makes sense to them

- reopening a conceptual issue when the dialogue has drifted too quickly toward closure

In other words, the teacher is not only explaining mathematics. The teacher is helping to make the explanation usable.

In the observed lessons, this often meant helping the student notice what the task was really asking, what part of an expression mattered most, what counted as completion, or why a method that looked efficient was not actually the right route for that learner at that moment.

This suggests that one of the most important questions for AI in mathematics education may not be whether it can replace explanation, but whether it can participate in interactions that remain educationally viable for a range of learners.

A different way of thinking about AI in maths classrooms

These observations also suggest a more constructive way of thinking about AI in mathematics learning.

Rather than treating AI simply as a source of answers or worked solutions, it may be more helpful to think about it as something that shapes the interaction around a task. Its value may depend less on what it knows in the abstract and more on how it frames, paces, and closes the dialogue.

That opens up some interesting educational possibilities.

Used well, AI might help students:

- identify what a question is really asking

- recognise what counts as a complete answer

- notice the structure of a model or expression

- move between different ways of reasoning

- build confidence to re-enter a problem without simply copying a solution

But this will not happen automatically. It depends on the quality of the interaction, on the learner’s existing understanding, and on whether the system supports or short-circuits the construction of meaning.

An emerging issue worth attending to

These are still early observations, and the work is ongoing. But they already point to something that deserves more attention.

The main educational issue may not be whether AI can generate mathematically correct output. In many cases, it clearly can. The issue may be whether learners can stay in a productive relationship with that output long enough for understanding to develop.

That is a different kind of question from the ones that usually dominate AI debates. It is less about capability in the abstract and more about classroom interaction, meaning-making, and the conditions under which learning remains possible.

If AI is going to play a growing role in mathematics education, then one of the most important research questions may be this:

Not simply whether AI works, but for whom it works, under what interactional conditions, and what kinds of learning it quietly makes more or less possible.

That feels especially important in relation to inclusion.

Because in the end, the question is not only whether AI can answer.

It is whether students can do something meaningful with the answer once it arrives.
